Frontend finally clicked: a Python-first mental model

Frontend felt like magic I couldn’t debug. If you write Python (backend/ML) and ship confidently server-side but second-guess every React PR, this is for you!

What finally worked wasn’t another library but a better mental model; once I had it, I shipped my personal site in ~72 hours and now build full webapps (auth, CRUD, admin) while reviewing frontend code with confidence.

TL;DR

- Mental model: HTML/CSS is UI state. Interactivity is how the DOM[1] changes - on the client (component state) or on the server (fragment swaps).

- Why it works for Python devs: server-driven tools (FastHTML, HTMX) make interactivity feel like routes → return HTML; no mysterious client state unless you need it.

- How to start: ship one static screen → add one interaction as a server swap → confirm the DOM change in DevTools → only then consider client state.

Wrong paths I tried

- Freelancers: clean PRs I couldn’t review confidently → output without intuition.

- More React tutorials: I could copy; I couldn’t transfer → no problem-solving.

- LLM patching: bugs gone; confusion stayed → unmaintainable code.

- Docs cover-to-cover: I understood concepts in isolation, not in motion → theory without intuition.

Pattern: I kept trying to “learn React harder” when I needed a different entry point.

The mental model that clicked

Modern web development has two concerns. Separate them mentally and everything gets clearer:

1. UI state - What the page looks like (HTML + CSS)

2. Interactivity - How it responds to user actions (DOM manipulation)

```mermaid
---
title: Simple static website
displayMode: compact
config:
  theme: 'dark'
---
sequenceDiagram
    autonumber
    participant U as User
    participant B as Browser/Client
    participant S as Server
    U->>B: Open site
    B->>S: GET /
    S-->>B: HTML + CSS
    Note over B: DOM + CSSOM
    B->>U: Renders webpage pixels
```

Every framework, every stack, every approach handles these two things. The difference is where and how they handle interactivity:

- Client-heavy (React, Vue, Svelte): JavaScript runs in the browser, manages state locally, updates the DOM

- Server-driven (Rails, Django, HTMX): server returns HTML fragments, browser swaps them in

Both paths end in the same HTML and CSS, and Tailwind classes work the same. The questions that matter are: where does state live, and how does the DOM change? Answering them is what makes code reviewable.
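To make the two concerns concrete, here is a toy Python sketch that is not tied to any framework. The names `render_toggle` and `on_click` are made up for illustration: UI state is whatever the render function returns, and interactivity is whatever changes its input.

```python
from html import escape


def render_toggle(label: str, on: bool) -> str:
    """UI state: the HTML (and CSS classes) a screenshot would show."""
    css = "toggle toggle-on" if on else "toggle toggle-off"
    pressed = "true" if on else "false"
    return f'<button class="{css}" aria-pressed="{pressed}">{escape(label)}</button>'


def on_click(on: bool) -> bool:
    """Interactivity: a user action produces the next state."""
    return not on


# Same render function, two different states -> two different DOMs.
before = render_toggle("Dark mode", on=False)
after = render_toggle("Dark mode", on_click(False))
print(before)
print(after)
```

Whether `on_click` runs in the browser or on the server is the client-heavy vs server-driven choice; the render step looks the same either way.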

Finding your adjacent framework

Learning speed follows familiarity. If you think in Python backend, don't start with React — start with something that feels like Python backend.

Adjacent framework principle: pick tools that match your strongest stack's philosophy, so your intuition transfers.

Why adjacency helps (in practice)

- Same language/runtime → fewer toolchains, faster debugging.

- Server-first render → inspectable HTML fragments in browser DevTools.

- Minimal client state → add client logic only when truly needed.

Examples by backend stack

Following that principle, here are my picks for different backend stacks.

| Your stack | Frontend framework | Why adjacent | Side effects |
|---|---|---|---|
| Ruby + Rails | Hotwire | extends Rails MVC, Turbo updates parts of the page | keep Rails views/partials |
| Python + FastAPI | FastHTML + HTMX | familiar route/decorator patterns | Python + Starlette end-to-end |
| TS + Node/Express | Remix | uses web standards (forms/actions), SSR[2] by default | easy Node deploys |
| PHP + Laravel | Livewire | keep Laravel controllers/validation/auth | interactions w/o writing JS |

If you're required to use React

- Prefer server components/App Router; add client islands only for truly browser-bound interactions.

- Keep state tiny and local; fetch/mutate on the server; use `useEffect` only for subscriptions/imperative sync.

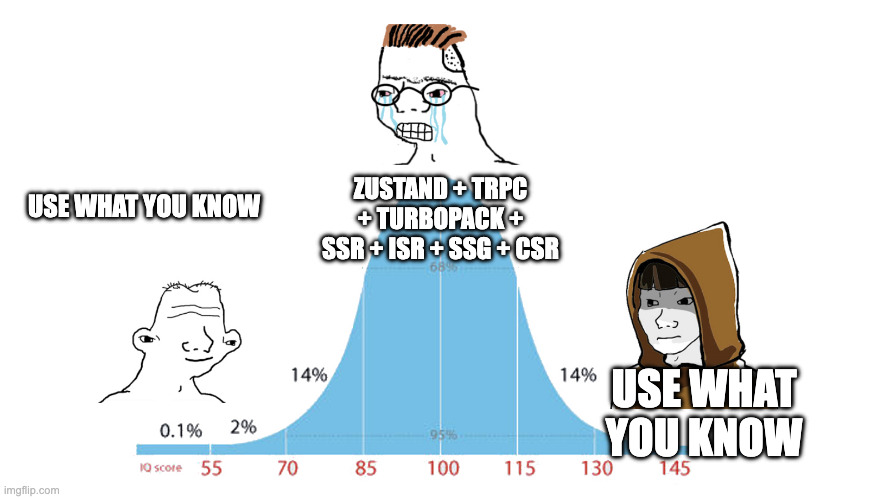

Bottom line: the “best” framework is the one that preserves your mental model while delivering reviewable outputs. Ride your last big win.

My path: Python → FastHTML

I think in FastAPI/Starlette. Frontend clicked when I treated UI state as HTML/Tailwind and interactivity as tiny server-returned fragments. No new brain model.

Let's build a search bar feature!

The model in code (FastHTML + HTMX)

```python
from fasthtml.common import fast_app, Div, Input, Li, Ul

app, rt = fast_app()

# NOTE: do_search(q) is the site's own search helper (not shown here);
# each hit is expected to expose a .title attribute.

@rt("/")
def home():
    return Div(
        Input(
            name="q",                                # name => sent as query param
            placeholder="Search...",
            hx_get="/search",                        # how the request is made
            hx_trigger="keyup changed delay:300ms",  # declarative debouncing
            hx_target="#results",                    # explicit swap target
            hx_swap="innerHTML",                     # replace inner content of #results
        ),
        Ul(id="results"),
    )

@rt("/search")
def search(q: str = ""):
    hits = [] if not q else do_search(q)
    return (
        [Li(h.title) for h in hits] if hits else [Li("No matches")]
    )  # server returns only items; HTMX replaces inner HTML
```

The server builds the HTML, the browser inserts it. No local state.

```mermaid
---
title: Server-driven (HTMX)
displayMode: compact
config:
  theme: 'dark'
---
sequenceDiagram
    autonumber
    participant U as User
    participant B as Browser/Client
    participant S as Server
    U->>B: Type "llm"
    Note over B: Input changes
    B->>B: wait ~300ms (trigger delay)
    B->>S: GET /search?q=llm
    S-->>B: HTML fragment (<li>…</li>)
    B->>B: Swap fragment
    B->>U: Renders webpage pixels
```
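The "wait ~300ms" step is where `keyup changed delay:300ms` earns its keep. The sketch below is a simplified pure-Python model of that behaviour, not htmx's actual implementation: `debounced_requests` and the keystroke timings are invented for illustration, and it assumes the input starts empty.

```python
def debounced_requests(events, delay_ms=300, initial=""):
    """Return (fire_time_ms, value) for each request a debounced input would send.

    events: list of (time_ms, input_value) keyup events, sorted by time.
    """
    fired = []
    pending = None        # (fire_at_ms, value) waiting for a pause in typing
    last_value = initial  # models the "changed" filter
    for t, value in events:
        # A pending request fires if the pause elapsed before this keystroke.
        if pending is not None and pending[0] <= t:
            fired.append(pending)
            pending = None
        if value == last_value:
            continue      # e.g. arrow keys: keyup without a value change
        last_value = value
        pending = (t + delay_ms, value)  # restart the countdown
    if pending is not None:
        fired.append(pending)
    return fired


# Typing "llm" quickly, pressing an arrow key, then adding "s" after a pause.
keys = [(0, "l"), (100, "ll"), (200, "llm"), (250, "llm"), (900, "llms")]
print(debounced_requests(keys))  # → [(500, 'llm'), (1200, 'llms')]
```

Five keyups turn into two requests. In the server-driven version that whole policy is one attribute string, which is why it is easy to review.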

Same feature in React (for contrast)

```jsx
import { useState, useEffect } from "react";

export default function SearchBar() {
  // UI state lives in the browser
  const [q, setQ] = useState("");
  const [hits, setHits] = useState([]);

  useEffect(() => {
    // empty input -> empty results
    if (!q) return setHits([]);

    const t = setTimeout(async () => {
      const res = await fetch(`/api/search?q=${encodeURIComponent(q)}`);
      const data = await res.json();
      setHits(data.results);
    }, 300);

    // cleanup: stop the timer
    return () => clearTimeout(t);
  }, [q]);

  return (
    <div>
      <input value={q} onChange={e => setQ(e.target.value)} placeholder="Search…" />
      <ul>{hits.map(r => <li key={r.id}>{r.title}</li>)}</ul>
    </div>
  );
}
```

Before: React scrambled my review loop. I couldn't quickly answer:

- When exactly does `useEffect` run and clean up?

- Which state change triggers which re-render?

- Is my debounce[3] correct across re-renders?

After: the server returns data, the browser derives HTML from client state, and each state change triggers a re-render.

```mermaid
---
title: Client-heavy (React)
displayMode: compact
config:
  theme: 'dark'
---
sequenceDiagram
    autonumber
    participant U as User
    participant B as Browser/Client
    participant S as Server
    U->>B: Type "llm"
    Note over B: Input changes
    B->>B: setQ("llm")
    B->>B: wait ~300ms (trigger delay)
    B->>S: GET /api/search?q=llm
    S-->>B: JSON {results:[…]}
    B->>B: setHits(results)
    B->>B: re-render triggered
    B->>U: Renders webpage pixels
```
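The two diagrams differ only in what crosses the wire and where rendering happens. A rough Python model of both paths makes that visible; `HITS`, `render_items`, and `client_render` are illustrative stand-ins, with `client_render` playing the role of the React component.

```python
import json
from html import escape

# Made-up search results for illustration.
HITS = [{"id": 1, "title": "LLM evals"}, {"id": 2, "title": "LLM <agents>"}]


def render_items(hits) -> str:
    """Turn hits into <li> markup, escaping user-visible text."""
    return "".join(f"<li>{escape(h['title'])}</li>" for h in hits)


def server_driven_response(hits) -> str:
    """Server-driven: the response body is already the fragment to swap in."""
    return render_items(hits)


def client_heavy_response(hits) -> str:
    """Client-heavy: the response body is data; rendering happens in the browser."""
    return json.dumps({"results": hits})


def client_render(body: str) -> str:
    """Stand-in for the component: parse JSON, hold it as state, derive markup."""
    state = json.loads(body)["results"]
    return render_items(state)


fragment = server_driven_response(HITS)
via_json = client_render(client_heavy_response(HITS))
print(fragment == via_json)  # → True
```

The final markup is the same. In the client-heavy path there is one extra hop (JSON, then state, then markup) that a reviewer has to hold in their head.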

Why it worked for me

- Familiar flow: decorators, typed parameters, request → response.

- Server logic: endpoints return HTML fragments instead of JSON, so I can inspect them in browser DevTools.

- Reviewable pieces: tiny fragments I can diff; handlers I can test.
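"Handlers I can test" can be taken literally. Below is a minimal sketch, assuming a hypothetical in-memory `do_search` and a plain-string version of the `/search` handler (`search_fragment` is a made-up name; real FastHTML components are rendered by the framework). The branching mirrors the handler shown earlier.

```python
from html import escape

POSTS = ["Frontend finally clicked", "Debugging FastAPI", "HTMX notes"]  # made-up corpus


def do_search(q: str) -> list[str]:
    """Hypothetical stand-in for the real search: case-insensitive substring match."""
    return [p for p in POSTS if q.lower() in p.lower()]


def search_fragment(q: str = "") -> str:
    """Same branching as the /search handler, but returning a plain HTML string."""
    hits = do_search(q) if q else []
    items = hits or ["No matches"]
    return "".join(f"<li>{escape(t)}</li>" for t in items)


def test_search_fragment():
    # One hit, empty query, and a query that matches nothing.
    assert search_fragment("htmx") == "<li>HTMX notes</li>"
    assert search_fragment("") == "<li>No matches</li>"
    assert search_fragment("<script>") == "<li>No matches</li>"


test_search_fragment()
print(search_fragment("f"))
```

No browser, no mocks: the output is a short string, so a failing assertion shows exactly which fragment changed.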

Where this fits (and where it doesn’t)

Great fit

- Content-first sites, admin/CRUD, dashboards

- Backend-strong teams

- SEO/linkable routes where server rendering helps

Use client-heavy instead

- Complex local state (rich editors, canvases, design tools)

- Real-time multi-user or offline-first requirements

- SPA-like interactions across most screens

What I built

72 hours → production

- Personal site with responsive layout (desktop + mobile nav)

- Blog (Markdown + frontmatter, code highlighting, footnotes)

- SEO plumbing (meta/OG images, sitemap.xml), deployed to VPS with Cloudflare in front

Impact snapshot

| Metric | FastHTML | React attempt |
|---|---|---|
| Time to ship | ~72 h | ~1 week |
| Homepage JS, size (transferred) | 67 kB (26 kB) | 628 kB (192 kB) |
| Homepage HTML payload | 21 kB | 28 kB |

LoC impact

At DjangoCon 2022, a company reported a 70% LoC reduction after a React → HTMX migration. I haven't noticed a similar reduction on my end yet.

Payload figures in the table above were measured in Chrome DevTools → Network (Disable cache on, Throttling off, 'Size' and 'Transferred'[4] columns).
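If the gap between the two columns looks surprising, a quick stdlib sketch shows where it comes from. The payload here is invented, and `gzip` is only a rough stand-in: a real server may use a different compression scheme, and DevTools also counts response headers in 'Transferred'.

```python
import gzip

# Made-up payload: repetitive markup, similar in spirit to a list-heavy page.
items = "".join(f'<li class="post">Post {i}</li>' for i in range(500))
html = f"<ul>{items}</ul>".encode("utf-8")

size = len(html)                        # analogous to "Size" (decoded bytes)
transferred = len(gzip.compress(html))  # rough analogue of "Transferred" (body only)

print(f"size={size} B, transferred~{transferred} B, ratio={transferred / size:.2f}")
```

Repetitive markup compresses well, which is why the transferred number can be a fraction of the decoded size.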

Same Tailwind classes. Same browser output. Different path to get there.

What changed

Before: code review felt like reading tea leaves — staring at useEffect deps and guessing which state change triggered which re-render. Working code, zero confidence.

After: I review by asking two questions: "What's the static structure?" and "What triggers a swap?" If I can't answer both in 10 seconds, the code needs simplification.
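The second question can even be answered mechanically. Here is a small sketch using only the standard library: `SwapFinder` is a made-up helper, and `page` is a hand-written approximation of the markup the search example would produce, not captured output.

```python
from html.parser import HTMLParser


class SwapFinder(HTMLParser):
    """Collect every element that carries hx-* attributes."""

    def __init__(self):
        super().__init__()
        self.swaps = []

    def handle_starttag(self, tag, attrs):
        # attrs is a list of (name, value) pairs with lowercased names.
        hx = {k: v for k, v in attrs if k.startswith("hx-")}
        if hx:
            self.swaps.append((tag, hx))


page = """
<div>
  <input name="q" placeholder="Search..."
         hx-get="/search" hx-trigger="keyup changed delay:300ms"
         hx-target="#results" hx-swap="innerHTML">
  <ul id="results"></ul>
</div>
"""

finder = SwapFinder()
finder.feed(page)
for tag, hx in finder.swaps:
    print(tag, hx)
```

Everything that is not in `finder.swaps` is static structure; everything that is in it is a trigger, a request, and a swap target spelled out in one place.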

The unlock wasn't learning React harder — it was realizing I didn't need to. I could use tools that matched how I already think: routes that return HTML, parameters that get validated, responses I can inspect in DevTools.

LLM interactions shifted from "fix this for me" to "translate this interaction into fragments/components I can verify". That's the difference between faith-based programming and deliberate practice.

Try it yourself

- Name the primitives (5 min): pick one feature (e.g. form, toggle, search). Write two bullets: "UI state" (what a screenshot shows) and "Interactivity" (what changes the DOM). If you can’t state both, stop and simplify.

- Pick an adjacent framework (5 min): match your strongest backend so your intuition transfers (see the table above).

- Build static first (30 min): ship pure HTML + Tailwind for that feature. No data, no JS. One breakpoint is enough.

- Wire one interaction (20-30 min): return an HTML fragment and swap it in (coding assistants can be helpful at this stage).

- Verify the model (10 min): use browser DevTools to confirm the payload (HTML fragment or data) and the DOM change.

What made frontend finally click for you? Stack, project, or mental model — share it. I’ll compile an anonymized “unlock” gallery so others can find their entry point.

I'm on Twitter and GitHub. If there’s interest, I’ll publish a Part 2 walking through my FastHTML/HTMX workflow end-to-end.

[1] DOM (Document Object Model) and its counterpart, the CSSOM, are the browser's internal representations of a webpage, which JavaScript can modify to create interactivity.

[2] SSR (server-side rendering): the server produces the HTML for a request, rather than the browser building it from JavaScript.

[3] Debounce: wait for a pause before doing something (e.g. when someone is typing, don't fire a search on every keystroke).

[4] Transferred = bytes on the wire. Size = decoded/resource size (what the browser parses after decompressing).